What Is AI Hallucination?

You asked ChatGPT a question. It gave you a confident, well-structured answer. You used it. Then someone pointed out that it was completely wrong.

That is AI hallucination. And it happens more often than most people realize.

If you use AI tools for work, research, or financial decisions — and you are based in India, the USA, or the UK — this is something you genuinely need to understand. Not in a technical way. In a practical, protect-yourself way.

By the end of this article, you will know exactly what AI hallucination is, why it happens, how to spot it before it costs you, and the mistakes people make when they trust AI blindly. No jargon. No fluff. Just what you actually need.


What Is AI Hallucination

Here is the thing most people get wrong: they think AI “lies.” It does not. Lying requires intent. AI has no intent.

AI hallucination is when an AI model generates information that sounds correct but is factually wrong, fabricated, or simply does not exist. The AI is not being deceptive. It genuinely does not know it is wrong.

Think of it like this. AI language models — tools like ChatGPT, Gemini, or Copilot — are trained on enormous amounts of text. They learn patterns. They learn that certain words follow other words. They learn how an “answer” should sound. But they do not look things up in real time (unless specifically built to do so). They predict. And sometimes, that prediction is confidently, fluently wrong.

For example, an AI might cite a research paper that does not exist. It might quote a judge in a legal case that never happened. It might give you a medicine dosage that is dangerously off. All delivered in the same calm, authoritative tone as when it is completely accurate.

This is not a bug that will simply be patched away. It is a fundamental challenge rooted in how large language models work. In 2026, even the most advanced models still hallucinate. They do it less often than they used to, but the risk has not disappeared.

So the question is not “does my AI hallucinate?” The question is: “When it does, will I catch it?”

Why Does AI Make Things Up

Understanding why hallucinations happen helps you predict when they are most likely to occur. Let’s be honest — most users never think about this, and that is exactly when mistakes happen.

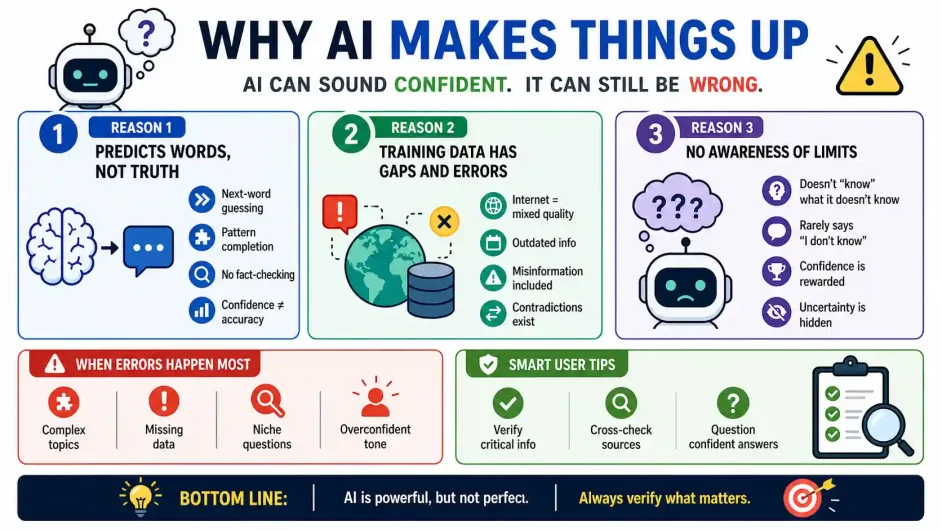

Reason 1: AI predicts words, not truth. A language model is trained to produce the most statistically likely next word or phrase. It is not searching a verified database. It is completing a pattern. When the pattern points toward a confident-sounding answer, the AI follows it — even into fiction.

Reason 2: Training data has gaps and errors. The internet, which most AI models are trained on, contains misinformation, outdated facts, and contradictions. The AI absorbs all of it without knowing what is reliable.

Reason 3: The model does not know what it does not know. This is the core problem. A well-designed AI should say “I am not sure.” But many models are trained in ways that reward confident-sounding answers, making them less likely to express uncertainty even when they should.
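To make Reason 1 concrete, here is a deliberately tiny Python sketch. It is a toy "bigram" model, not how ChatGPT or any real product is built: real models are neural networks that weigh far more context than the single previous word. The three-sentence corpus and the case names in it are invented for illustration. The point is only that a system which always picks the most common continuation can produce a fluent, specific, wrong statement.

```python
from collections import Counter, defaultdict

# Invented toy corpus: "2019" follows "decided in" twice, "2021" only once.
TOY_CORPUS = [
    "smith v jones was decided in 2019",
    "smith v jones was decided in 2019",
    "brown v jones was decided in 2021",
]


def train_bigrams(sentences):
    """Count, for each word, how often every other word follows it."""
    follows = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows


def generate(follows, start, max_words=12):
    """Greedily append the most frequent next word until none is known.

    Nothing here checks whether the resulting sentence is true.
    """
    words = [start]
    while len(words) < max_words and follows.get(words[-1]):
        next_word, _count = follows[words[-1]].most_common(1)[0]
        words.append(next_word)
    return " ".join(words)


if __name__ == "__main__":
    model = train_bigrams(TOY_CORPUS)
    print(generate(model, "brown"))  # brown v jones was decided in 2019
```

The training text says the invented "brown v jones" case was decided in 2021, but "2019" follows "decided in" more often, so the toy model confidently outputs the wrong year. The code only ever asks "what usually comes next?", never "is this true?"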

Here is a quick comparison of hallucination risk by task type:

| Task Type | Hallucination Risk | Why |
| --- | --- | --- |
| General explanation (e.g., “What is inflation?”) | Low | High-volume, consistent training data |
| Recent news or events | High | May be outside the training data cutoff |
| Citing specific sources, papers, or quotes | Very High | Models often fabricate citations |
| Legal or medical specifics | Very High | Precision required; errors are dangerous |
| Creative writing or summaries | Low | Relies less on recalled facts; creative work tolerates invention |

The riskiest thing you can do is ask AI for a specific fact, a source, a name, a date, or a regulation — and trust the answer without checking.

How to Protect Yourself From AI Hallucinations in 2026

Practical steps. Real examples. No hand-waving.

Step 1: Treat every specific fact as unverified until you check it. If an AI tells you a tax rule, a court ruling, a drug interaction, or a company’s financial data — verify it against the original source. This is non-negotiable.

Step 2: Ask the AI to show its sources. It will not always get this right, but asking “Where can I verify this?” sometimes prompts more cautious, qualified responses. If it gives you a URL, check that the URL actually exists, and that the page says what the AI claims, before using the information.

Step 3: Use AI for reasoning, not for facts. AI is genuinely excellent at explaining concepts, structuring your thinking, drafting content, and comparing frameworks. Use it for that. For raw facts, especially in finance, law, or medicine, go to the primary source. When it comes to personal finance, a tool like ChatGPT can help you model scenarios, test strategies, and challenge assumptions, giving you clarity before you act.

Step 4: Cross-reference with at least two other sources. If you read something on an AI tool and it seems important, spend 90 seconds confirming it on a government website, a verified news outlet, or an official database. In India, for regulatory or tax queries, always verify against SEBI, RBI, or the Income Tax Department’s website. In the UK, use gov.uk. In the USA, use .gov domains.

Step 5: Watch out for confident, specific-sounding answers. Hallucinations are most dangerous when they sound the most authoritative. If an AI gives you a very precise figure, such as “the penalty is $10,000” or “the 2026 amendment states…”, that precision is a signal to double-check, not a signal to trust more.

The irony is that vague AI answers are often safer. Specificity without a verifiable source is a red flag.
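If you handle a lot of AI output, Step 5 can be turned into a simple reminder. The Python sketch below uses a hypothetical helper, `flag_specifics`, with a few rough regular expressions to pull money amounts, percentages, years, and quoted text out of an AI answer so you know what to verify. It is a heuristic under stated assumptions, not a hallucination detector: it cannot tell whether a flagged detail is true, and it will miss specifics such as names, case citations, or section numbers that its patterns do not cover.

```python
import re

# Hypothetical, deliberately rough patterns for "specific-sounding" details.
# They mark what to verify; they say nothing about whether it is correct.
PATTERNS = {
    "money": re.compile(r"[$£€₹]\s?\d[\d,]*(?:\.\d+)?"),
    "percent": re.compile(r"\b\d+(?:\.\d+)?\s?%"),
    "year": re.compile(r"\b(?:19|20)\d{2}\b"),
    "quote": re.compile(r'["“][^"”]+["”]'),
}


def flag_specifics(answer):
    """Return (kind, text) pairs for details to check against a primary source."""
    flags = []
    for kind, pattern in PATTERNS.items():
        for match in pattern.finditer(answer):
            flags.append((kind, match.group()))
    return flags


if __name__ == "__main__":
    reply = "The penalty is $10,000 under the 2026 amendment, a 12% increase."
    for kind, text in flag_specifics(reply):
        print(f"check {kind}: {text}")
```

Run on the sample reply, it lists `$10,000`, `12%`, and `2026` as items to confirm. A vague sentence with no figures, dates, or quotes produces an empty list, which matches the point above: it is the specifics that need checking.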

Real AI Hallucination Examples That Actually Happened

These are not edge cases. They are documented, real-world incidents where AI hallucination caused financial loss, professional damage, or public embarrassment.

- The lawyer who got fined $5,000. In 2023, a New York federal judge sanctioned lawyers who had submitted a legal brief prepared with ChatGPT that cited non-existent court cases. Attorneys Peter LoDuca and Steven Schwartz, along with their law firm, were ordered to pay a $5,000 fine and to notify every judge falsely identified as the author of the fabricated rulings. The AI had invented case names, docket numbers, and legal opinions, all in fluent legal language. Source: CNBC

- The airline that lost in court over a chatbot’s wrong answer. In February 2024, a Canadian small claims tribunal ordered Air Canada to compensate a traveller after its chatbot gave him incorrect information about its bereavement fare policy. Air Canada’s defence, that the chatbot was “a separate legal entity responsible for its own actions”, was rejected outright. The tribunal ruled that the airline was responsible for all the information on its website, whether it came from a static page or a chatbot. Source: AI Business

- The newspaper that published fake books by real authors. In May 2025, newspapers including the Chicago Sun-Times and the Philadelphia Inquirer published a syndicated summer reading list where nine out of fifteen titles did not exist. Author Isabel Allende had no book called Tidewater Dreams, and Pulitzer Prize winner Percival Everett never wrote The Rainmakers — yet both were described in confident, detailed summaries. Source: NPR

- The AI that fabricated medical information. A 2024 study published in JAMA Pediatrics found that ChatGPT made diagnostic errors in 83 of 100 pediatric cases drawn from real-world scenarios. Separately, an AI-powered drug interaction checker hallucinated potential drug interactions, causing physicians to unnecessarily avoid effective medication combinations. Sources: BioLife Health Center

- The fake quote attributed to a real person. In a study published by the Columbia Journalism Review, ChatGPT was asked to identify the source of 200 quotes from popular journalism sites and got 153 of them (about 76%) wrong. In only 7 of those 153 errors did the AI indicate any uncertainty to the user. In other words: wrong, confident, and silent about it. Source: Nielsen Norman Group

The pattern is the same every time: specific, confident, and wrong. And in each case, the damage landed on the people and organisations that relied on or published the output, not on the AI that produced it.

Common Myths About AI Hallucination Most People Get Wrong

Let’s clear these up quickly.

Myth 1: “Newer AI models do not hallucinate anymore.” False. Newer models hallucinate less frequently. But they still do it — and sometimes, because they are more fluent and convincing, they are harder to catch.

Myth 2: “If the AI sounds confident, it must be right.” This is the most dangerous assumption. Confidence is a product of how AI models are trained, not a reliable signal of accuracy. An AI will state a fabricated legal case in the same tone it uses to explain basic arithmetic.

Myth 3: “I can just ask the AI if it’s sure.” When you ask “Are you sure?”, most AI models will confirm the answer or soften it slightly rather than genuinely re-evaluate it. You are still in a feedback loop with a pattern-predicting machine.

Myth 4: “Hallucination only affects obscure topics.” Hallucinations happen with common topics too. Specific figures, quotes, and citations are risky regardless of how well-known the general subject is. A model might correctly explain how a mutual fund works, then make up a completely fictional fund name as an example.

Myth 5: “It is the AI company’s problem, not mine.” Practically speaking, it is yours. The consequences of acting on wrong information land on you — not on the platform. Responsibility starts the moment you copy an AI answer into a report, a message, or a decision.

Here are the three things to take away from this article.

- AI hallucination is not a glitch — it is a structural feature of how language models work. It will not disappear completely, regardless of how advanced these tools become.

- The risk is highest with specifics: citations, legal rules, financial figures, dates, and medical details. Use AI to think; verify with primary sources for facts.

- Your best protection is habit, not technology. Build a simple rule: AI for reasoning, primary sources for facts. Make it automatic.

One action you can take today: the next time an AI gives you a specific number, law, or citation, spend 60 seconds checking it. That habit alone will protect you more than any AI safety feature.

Frequently Asked Questions (FAQ)

What is AI hallucination in simple terms?

AI hallucination is when an AI tool produces information that sounds correct but is actually wrong or made up. It happens because AI models predict likely-sounding responses rather than checking verified facts. The AI is not intentionally lying — it simply does not know the difference between what it invented and what is real.

Why does AI hallucinate even on well-known topics?

Even on familiar subjects, AI can hallucinate when asked for specific details like exact quotes, precise figures, or citations. The model may know the general concept well but fill in specifics by prediction rather than retrieval. This is why hallucinations often slip past readers who already understand the topic at a high level.

How can I tell if an AI is hallucinating?

Watch for specific-sounding claims — exact figures, named sources, quoted statistics, or regulatory details — and verify those independently. Hallucinations often involve information that cannot easily be traced back to a real, verifiable source. If a URL the AI cites does not exist, or a paper cannot be found in any database, it was likely fabricated.
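If you are comfortable running a script, one of those checks, whether a cited URL exists at all, can be done with Python's standard library. The sketch below is a minimal example under some assumptions: `url_resolves` is a hypothetical helper name, the `User-Agent` string is arbitrary, and any HTTP status below 400 counts as "exists". Treat `False` as "look more closely" rather than proof of fabrication, because some real sites block scripted requests. A `True` result only means a page exists; you still have to read it to confirm it says what the AI claimed.

```python
import urllib.error
import urllib.parse
import urllib.request


def url_resolves(url, timeout=10.0):
    """Return True if `url` is an http(s) URL whose server answers without an error status.

    True only means a page exists; it does not mean the page supports the AI's claim.
    """
    parts = urllib.parse.urlparse(url)
    # Reject anything that is not an absolute http(s) URL before touching the network.
    if parts.scheme not in ("http", "https") or not parts.netloc:
        return False
    # Try a lightweight HEAD request first; some servers refuse HEAD, so fall back to GET.
    for method in ("HEAD", "GET"):
        request = urllib.request.Request(
            url, method=method, headers={"User-Agent": "citation-check/0.1"}
        )
        try:
            with urllib.request.urlopen(request, timeout=timeout) as response:
                return response.status < 400
        except urllib.error.HTTPError as err:
            # 405 means "method not allowed": retry with GET.
            # Other error codes (404 not found, 403 blocked, ...) are reported as False.
            if err.code == 405 and method == "HEAD":
                continue
            return False
        except (OSError, ValueError):
            # DNS failure, refused connection, timeout, or a malformed host name.
            return False
    return False
```

For example, a real government homepage should normally return `True`, while a made-up path on a real domain typically returns `False` because the server answers with a 404.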

Is AI hallucination dangerous for financial decisions?

Yes, particularly for anything involving specific tax rules, investment regulations, penalty amounts, or market data. In India, the USA, and the UK, financial regulations change frequently. An AI whose training data is even a few months old may give you outdated or fabricated regulatory details with complete confidence. Always verify financial and legal information with official government or regulatory sources.

Which AI tools hallucinate the least in 2026?

All major AI tools, including ChatGPT, Gemini, and Copilot, still hallucinate to some degree. Tools with real-time web search built in (like Perplexity AI or the web-browsing version of ChatGPT) tend to hallucinate less on current-events queries because they retrieve live information. However, no tool is hallucination-free. The task type matters more than the tool: citation and specific-fact queries remain risky across all platforms.

Can AI hallucination affect robo-advisors and automated investment tools?

Yes, and this is a real risk most investors overlook. Some robo-advisors use AI to generate portfolio suggestions and market summaries, and a hallucinated fund rating or wrong risk figure can quietly damage your returns. Fund owners and platform managers should continuously audit AI-generated responses, especially after market shifts or regulatory updates, to catch errors before they reach the investor. Always cross-check AI-generated recommendations against official platform documentation.